Hi Westa,

I was travelling and had no time to look into it until now.

This is the section for the realsense in nuitrack.config:

"Realsense2Module": {

"Depth": {

"ProcessMaxDepth": 5000,

"RawWidth" : 848,

"RawHeight" : 480,

"ProcessWidth": 848,

"ProcessHeight": 480,

"FPS": 90,

"Preset": 5,

"PostProcessing": {

"SpatialFilter": {

"spatial_iter": 0,

"spatial_alpha": 0.5,

"spatial_delta": 20

},

"DownsampleFactor": 1

},

"LaserPower": 1.0

},

"FileRecord": "",

"Depth2ColorRegistration": true,

"RGB": {

"RawWidth" : 1920,

"RawHeight" : 1080,

"ProcessWidth": 1920,

"ProcessHeight": 1080

}

},

So I assume that my raw and processed dimensions are the same, both 848x480.

848x480 = 407 040 points

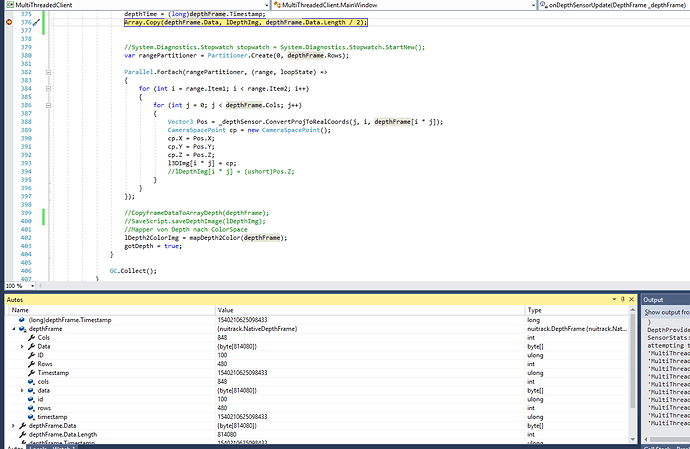

Now, in my code I copy the data from depthFrame into a new array, but the depthFrame.Data array is twice as long as expected: 814080 entries (see screenshot).

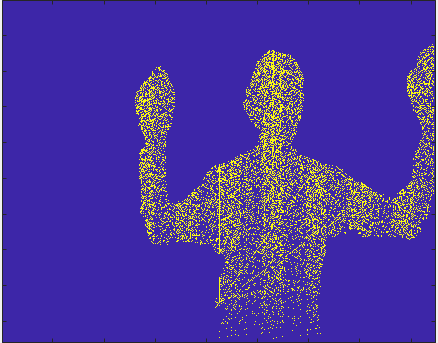

If I take only the first half of this data array and save it as a picture, I get the results from the previous post.
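A possible explanation for the doubled length, assuming depthFrame.Data is a byte[] in which every depth value occupies two bytes (a 16-bit value): 848 x 480 x 2 = 814080. If that assumption holds and the rows are stored one after another, the first half of the array would contain only the upper half of the image, not the whole image. A minimal sketch of turning such a byte buffer back into ushort values, using a synthetic buffer instead of the real frame (the class and method names are made up for illustration):

```csharp
using System;

static class DepthBytes
{
    // Reinterprets a raw byte buffer as 16-bit depth values (2 bytes per pixel).
    // Assumption: the buffer uses the machine's native byte order.
    public static ushort[] ToUShorts(byte[] raw)
    {
        if (raw.Length % 2 != 0)
            throw new ArgumentException("Expected an even number of bytes.", nameof(raw));

        var depth = new ushort[raw.Length / 2];
        // Buffer.BlockCopy counts in bytes, not in array elements.
        Buffer.BlockCopy(raw, 0, depth, 0, raw.Length);
        return depth;
    }

    public static void Main()
    {
        const int width = 848, height = 480;

        // Synthetic stand-in for depthFrame.Data (assumed layout, see above).
        byte[] raw = new byte[width * height * sizeof(ushort)];

        // Store the value 1234 in pixel 0, in native byte order.
        byte[] sample = BitConverter.GetBytes((ushort)1234);
        raw[0] = sample[0];
        raw[1] = sample[1];

        ushort[] depth = ToUShorts(raw);
        Console.WriteLine(raw.Length);   // 814080
        Console.WriteLine(depth.Length); // 407040
        Console.WriteLine(depth[0]);     // 1234
    }
}
```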

Data Copy:

// create new ushort array (one entry per depth pixel)

private ushort[] lDepthImg = new ushort[848 * 480];

private void onDepthSensorUpdate(DepthFrame _depthFrame)

{

// DepthStream

if (true)

{

depthFrame = _depthFrame;

if (depthFrame != null)

{

depthTime = (long)depthFrame.Timestamp;

//Copy data to new array

CopyFrameDataToArrayDepth(depthFrame);

//Save a Picture

SaveScript.saveDepthImage(lDepthImg);

}

}

}

void CopyFrameDataToArrayDepth(DepthFrame frame)

{

//fast and efficient way to copy the data

var rangePartitioner = Partitioner.Create(0, depthFrame.Rows);

Parallel.ForEach(rangePartitioner, (range, loopState) =>

{

for (int i = range.Item1; i < range.Item2; i++)

{

for (int j = 0; j < frame.Cols; j++)

{

lDepthImg[i * j] = frame[i * j];

}

}

});

}
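For comparison, a minimal sketch of the usual row-major indexing, where the pixel in row i and column j sits at flat index i * cols + j. With the product i * j used above, every pixel in row 0 or column 0 lands on index 0 and many slots of the target array are never written. The sketch uses a plain delegate, getPixel, as a hypothetical stand-in for whatever per-pixel accessor the frame offers, so it runs without the Nuitrack SDK:

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

static class RowMajorCopy
{
    // Copies a rows x cols image into a flat array, in parallel over row ranges.
    // getPixel is a hypothetical stand-in for a per-pixel accessor on the frame.
    public static ushort[] Copy(int rows, int cols, Func<int, int, ushort> getPixel)
    {
        var flat = new ushort[rows * cols];
        var rangePartitioner = Partitioner.Create(0, rows);

        Parallel.ForEach(rangePartitioner, (range, loopState) =>
        {
            for (int i = range.Item1; i < range.Item2; i++)
            {
                for (int j = 0; j < cols; j++)
                {
                    // Row-major offset: skip i full rows, then move j columns.
                    flat[i * cols + j] = getPixel(i, j);
                }
            }
        });

        return flat;
    }

    public static void Main()
    {
        // 3 x 4 test image whose pixel value encodes its own position.
        ushort[] flat = Copy(3, 4, (i, j) => (ushort)(10 * i + j));
        Console.WriteLine(string.Join(",", flat));
        // 0,1,2,3,10,11,12,13,20,21,22,23
    }
}
```

Each row range writes to its own slice of the array, so the parallel loop needs no locking.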

Save as picture:

public static void saveDepthImage(ushort[] depthValues)

{

byte[] tranformedDepthImage = TransformDepthImageArray(depthValues, imageType.depthImage);

saveImage(Environment.GetFolderPath(Environment.SpecialFolder.MyPictures) + @"\RealsenseTest\depthImage" + savedImagesCounter + ".png",

tranformedDepthImage, depthImageWidth, depthImageHeight, imageType.depthImage);

savedImagesCounter++;

}

private static byte[] TransformDepthImageArray(ushort[] rawData, imageType imageType)

{

byte[] result = new byte[depthImageHeight * depthImageWidth * 4];

int currentIndex = 0;

foreach (int depth in rawData)

{

byte color = (byte)(depth * 255 / 5000);

result[currentIndex++] = (byte)(255 * color) ;

result[currentIndex++] = (byte)(255 * color);

result[currentIndex++] = 0;

result[currentIndex++] = 255;

}

return result;

}
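A side note on the scaling above: in C#'s default unchecked context, (byte)(255 * color) keeps only the low 8 bits of the product, and depth values above 5000 also overflow the first cast, so the stored intensities are not a simple brightness ramp. A minimal sketch of a clamped depth-to-grayscale mapping follows. The 4-bytes-per-pixel layout with a trailing alpha byte is taken from the code above; writing the same value to all three color channels and the names DepthToRgba and Convert are choices made for this illustration only:

```csharp
using System;

static class DepthToRgba
{
    // Maps depth values to 4 bytes per pixel (gray, gray, gray, alpha).
    // Values above maxDepth are clamped so the byte cast cannot wrap around.
    public static byte[] Convert(ushort[] depth, int maxDepth)
    {
        var pixels = new byte[depth.Length * 4];

        for (int p = 0; p < depth.Length; p++)
        {
            int clamped = Math.Min((int)depth[p], maxDepth);
            byte gray = (byte)(clamped * 255 / maxDepth); // always within 0..255

            int offset = p * 4;
            pixels[offset] = gray;
            pixels[offset + 1] = gray;
            pixels[offset + 2] = gray;
            pixels[offset + 3] = 255; // fully opaque
        }

        return pixels;
    }

    public static void Main()
    {
        byte[] pixels = Convert(new ushort[] { 0, 2500, 5000, 9000 }, 5000);
        // Print the first channel of each pixel.
        Console.WriteLine($"{pixels[0]},{pixels[4]},{pixels[8]},{pixels[12]}");
        // 0,127,255,255
    }
}
```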

I also tried another approach where I use Array.Copy:

// Divided by 2 because of double length of depthFrame.Data array

Array.Copy(depthFrame.Data, lDepthImg, depthFrame.Data.Length / 2);

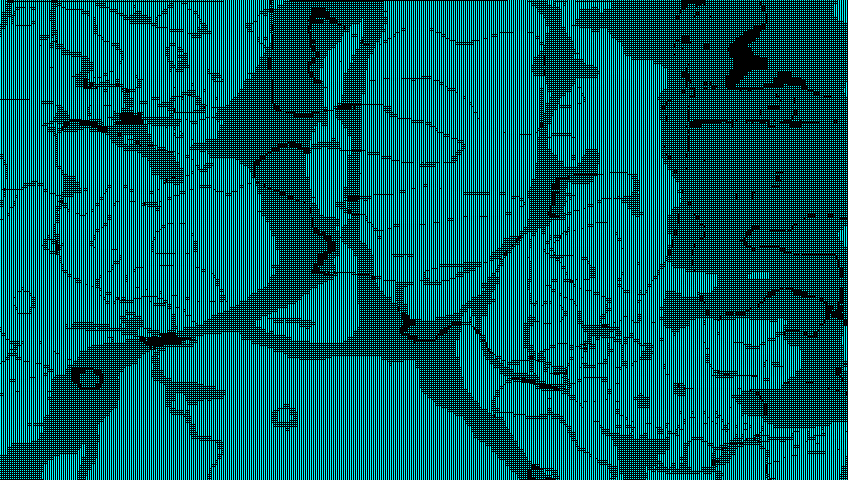

but then I get something like this:
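Regarding the Array.Copy attempt: if depthFrame.Data is a byte[] (an assumption, not confirmed here), Array.Copy into a ushort[] performs a widening conversion per element, so every single byte becomes its own ushort instead of two bytes forming one depth value. Buffer.BlockCopy copies the raw bytes instead. A small sketch with synthetic data that shows the difference (the helper names Widen and Reinterpret are made up for illustration):

```csharp
using System;

static class CopyComparison
{
    // Array.Copy converts element by element: one byte in, one ushort out.
    public static ushort[] Widen(byte[] raw, int count)
    {
        var result = new ushort[count];
        Array.Copy(raw, result, count);
        return result;
    }

    // Buffer.BlockCopy copies raw bytes: two bytes in, one ushort out.
    public static ushort[] Reinterpret(byte[] raw)
    {
        var result = new ushort[raw.Length / 2];
        Buffer.BlockCopy(raw, 0, result, 0, result.Length * sizeof(ushort));
        return result;
    }

    public static void Main()
    {
        // Two pixels, 1000 and 2000, serialized to bytes in native byte order.
        ushort[] original = { 1000, 2000 };
        byte[] raw = new byte[original.Length * sizeof(ushort)];
        Buffer.BlockCopy(original, 0, raw, 0, raw.Length);

        // Each value here is a single byte of the input, so it never exceeds 255.
        Console.WriteLine(string.Join(",", Widen(raw, raw.Length / 2)));

        Console.WriteLine(string.Join(",", Reinterpret(raw))); // 1000,2000
    }
}
```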

Thanks for looking into this.

Best,

Björn