Hello,

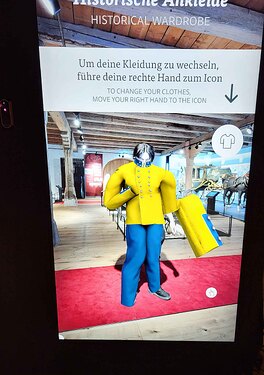

I’m developing a Unity application similar to a virtual fitting room, where users can try on different outfits and see themselves on a large display that acts as a mirror.

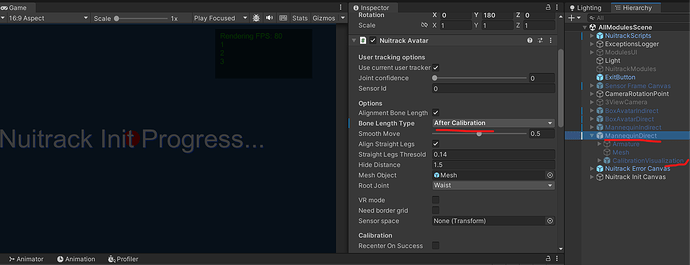

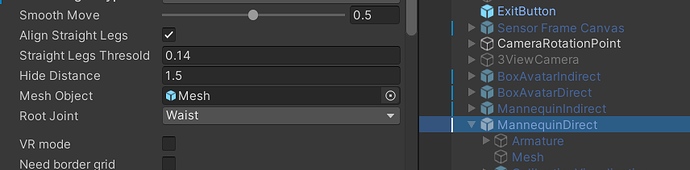

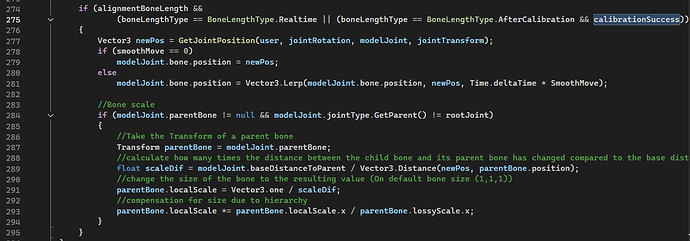

I’m facing problems with the Nuitrack Avatar component, especially with the “Alignment Bone Length” option. Since users of very different heights will interact with the app (from kids to tall adults), the outfits should scale accordingly, and as far as I understand, this option is meant to allow that.

I’ll try to describe the problems as well as I can. They occur when using Nuitrack Avatar with Alignment Bone Length enabled and Bone Length Type set to Realtime:

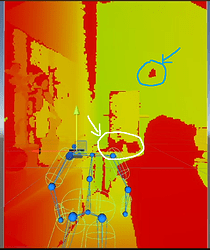

- Jittering of bones/joints during movement: When I move around with the outfit tracked on me, especially forward and backward, some bones/joints jitter and jump around. They don’t jump very far, only about an arm’s length, and not all joints by the same amount. The area around the right arm/shoulder usually shakes the most. As soon as I turn off “Alignment Bone Length”, this behaviour stops.

- Wrong rescaling of joints: On my own models, and sometimes on the demo models, the mesh does not rescale correctly. Some body parts become very small, while others, especially the neck and head, become extremely big. For example, on my own models the scale in the Transform component of the neck joint gets set to 2-3, which makes the head extremely big. Similar things happen to other body parts.

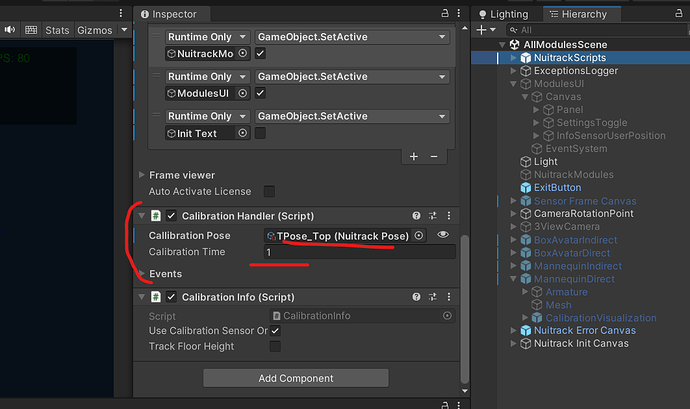

I tried these things in various scenes, including my own scene, the Virtual Fitting Room Tutorial and the All Modules Scene.

My skeleton seems to be tracked correctly: for example, when I run Tutorials/RGBandSkeletons/, the skeleton follows me as expected. Without the Alignment Bone Length option, the avatar tracking also works mostly fine.

The jittering happens everywhere, even in the example/demo scenes of the NuitrackSDK. The wrong rescaling also partially happens in, for example, the All Modules Scene, where the body of the mannequin is stretched a bit oddly. It’s not as extreme as in my own scene with my own models, but it still happens there.

My system configuration is as follows:

- Windows 11

- Sensor: Orbbec Femto Bolt

- Sensor orientation: Portrait and mirror

- Unity 6000.0.51f1

- Nuitrack SDK v0.38.4

- NuitrackSDK.unitypackage v0.38.4

- OrbbecSDK v1.10.18

- OrbbecViewer v1.10.2

- Firmware Update sensor FemtoBolt_v1.1.2

I adjusted the nuitrack.config (RGB Width, Height and FPS), since I wasn’t able to set the correct custom resolution for portrait mode via the Nuitrack Manager in Unity. With my adjustments in the nuitrack.config it works. I don’t know whether this is related to my problems, so I’m just adding it here as info.

    "OrbbecSDKDepthProviderModule": {
        "Depth": {
            "AutoExposure": true
        },
        "RGB": {
            "Width": 3840,
            "Height": 2160,
            "FPS": 30,
            "AutoExposure": true
        }
    }

So my questions would be:

- Where could the jittering come from? It’s weird that it also happens in the SDK’s example scenes.

- If there’s no direct solution to the jittering, is there maybe a way to “smooth” this behaviour?

- What could cause the incorrect rescaling of the meshes and bone joints when using Alignment Bone Length? I made sure to set up the bones and the distances between them in the model as close to the Avatar map in the Nuitrack Avatar component as possible.

- Is there maybe a different approach to the rescaling of the bones to fit different people’s heights? One that is not using Alignment Bone Length? Or can we ignore the scale and just use the position to stretch the model?

- Do you maybe have some more tips for modeling / setting up bones correctly that I might have missed?
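To make the smoothing question more concrete, here is a rough sketch of the kind of filtering I have in mind: an exponentially smoothed, clamped scale factor per bone. It is plain Python only to show the logic (in Unity it would be a C# script), and it is not based on how Nuitrack Avatar actually computes the scale. The class name, `model_length`, `alpha` and the clamp limits are all names and values I made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class SmoothedBoneScale:
    """Exponentially smoothed, clamped scale factor for a single bone.

    All names and default values are hypothetical; none of this is
    Nuitrack API.
    """
    model_length: float      # bone length in the rigged model
    alpha: float = 0.1       # smoothing factor in (0, 1]; smaller is smoother
    min_scale: float = 0.5   # assumed lower bound to reject outliers
    max_scale: float = 1.5   # assumed upper bound to reject outliers
    scale: float = 1.0       # current smoothed scale

    def update(self, tracked_length: float) -> float:
        """Blend the newest measurement into the running scale and return it."""
        if tracked_length <= 0.0 or self.model_length <= 0.0:
            return self.scale  # ignore invalid measurements
        raw = tracked_length / self.model_length
        raw = max(self.min_scale, min(self.max_scale, raw))  # clamp spikes
        self.scale += self.alpha * (raw - self.scale)        # exponential smoothing
        return self.scale


if __name__ == "__main__":
    bone = SmoothedBoneScale(model_length=0.30)
    # 0.90 stands in for a single-frame tracking spike
    for measured in (0.30, 0.31, 0.90, 0.29):
        print(round(bone.update(measured), 3))
```

The idea is that a single bad frame only nudges the scale slightly instead of setting it to 2-3 directly. The trade-off is that the outfit adapts more slowly when a new user steps in front of the sensor.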

I hope my information is a good starting point. If you need more insight, please let me know.

Also an additional question:

- Is there currently a possibility to read the head joint rotation and move the head accordingly? Some outfits include hats, and currently they don’t rotate correctly. I read that there’s currently no way to achieve this, but maybe there is some news or a workaround.

Thanks in advance!